Why I Built a Priority Queue for Ollama

Fourteen AI agents. One GPU. Eighty-four cron jobs. All funneling through a single Ollama instance on a home server.

The GPU (a Tesla P40 with 24GB of VRAM) generates at 56 tokens per second and embeds text in 34 milliseconds. Plenty fast.

The problem wasn’t compute. It was the line.

One Card, Fourteen Agents

I run a 16-agent system on my home server: 15 specialized workers plus a coordinator, handling everything from news intelligence to commodity trading to pool coaching. The hardware is an Intel i7-8700K with 32GB RAM and a used Tesla P40. No cloud GPU. One card doing everything.

The inference stack is Ollama serving two models: Qwen3 30B-A3B (a mixture-of-experts LLM) for generation and nomic-embed-text for embeddings. Two models, fourteen agents, one dispatch queue.

The FIFO Problem

Ollama serializes all requests. One at a time. Pure FIFO: first in, first out. If you’re the only user, this is fine.

With fourteen agents and 84 cron jobs, it’s a disaster.

LLM generation requests take 40–90 seconds each. Embedding requests take ~34 milliseconds. When 2–3 LLM jobs stack in Ollama’s internal queue (which happens routinely), a 34ms embedding call gets stuck behind all of them. Waiting 80 to 270 seconds. Then it times out.
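The 80-to-270-second range falls straight out of those numbers: in a FIFO queue, a request waits for every job ahead of it. A quick sketch of the arithmetic (the helper name is purely illustrative, not something from the proxy):

```javascript
// Worst-case FIFO wait: an embed waits for every LLM job queued ahead of it.
// fifoWaitSeconds is an illustrative helper, not part of the proxy.
function fifoWaitSeconds(jobsAhead, secondsPerJob) {
  return jobsAhead * secondsPerJob;
}

const best = fifoWaitSeconds(2, 40);  // two short LLM jobs ahead
const worst = fifoWaitSeconds(3, 90); // three long LLM jobs ahead
console.log(`${best}s to ${worst}s of waiting for a 0.034s embed`);
// prints "80s to 270s of waiting for a 0.034s embed"
```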

This wasn’t a theoretical edge case. Embedding timeouts were breaking my Mem0 persistent memory system, my personal knowledge base search, the pool coaching RAG pipeline, and Midas’s commodity signal ingestion. Four critical systems, all failing because a 34ms operation couldn’t cut the line ahead of 90-second LLM jobs.

I tested OLLAMA_NUM_PARALLEL=2. Made things worse. On a memory-bandwidth-bound GPU, splitting parallel requests dropped single-request throughput from 63 to 56 tok/s, a net 25% total throughput loss. Ollama’s scheduler (server/sched.go) is pure FIFO with no priority system. So I built one.

620 Lines of Stdlib Node.js

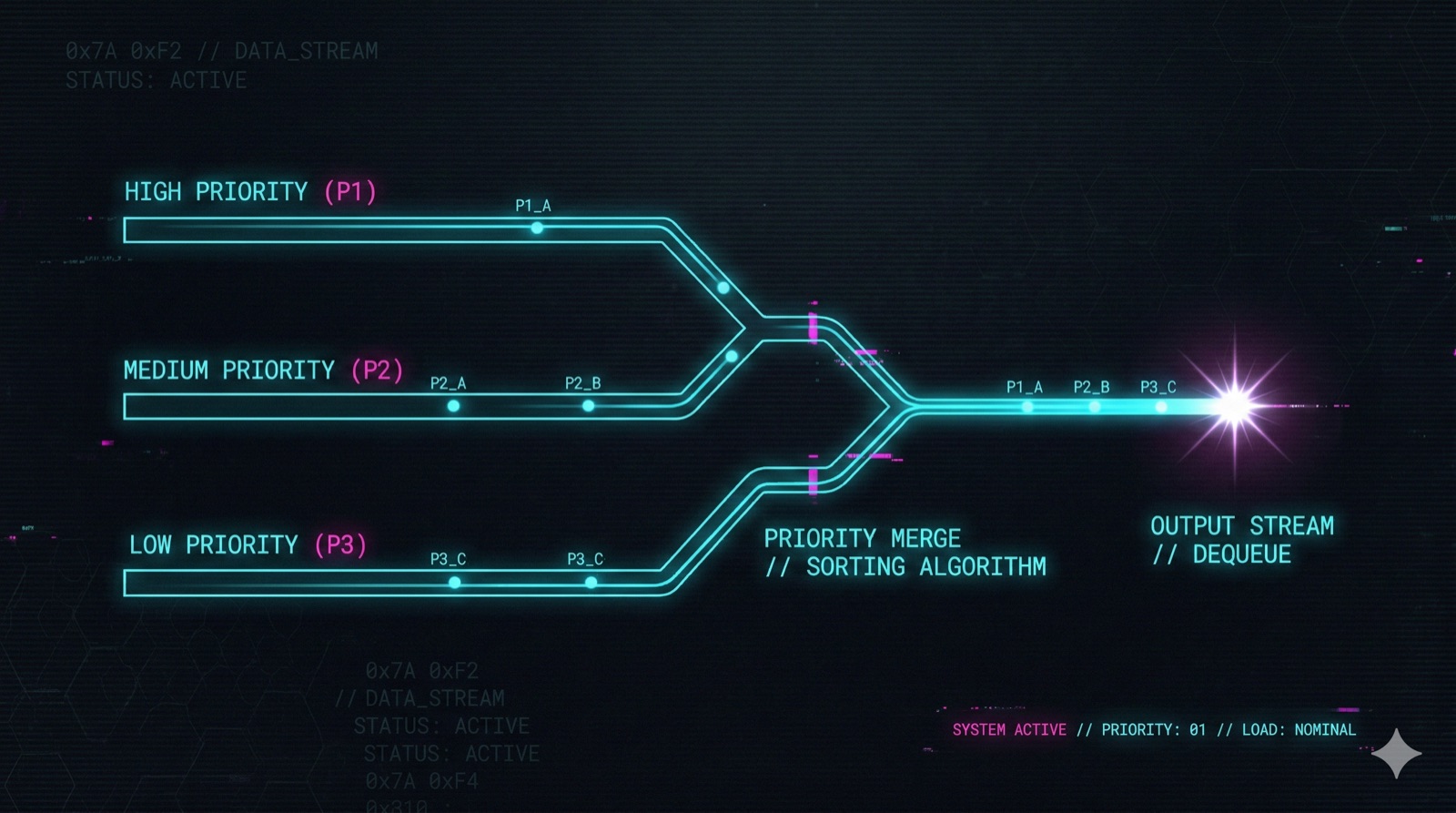

The solution is a proxy sitting between all clients and Ollama. Port 11440 in front of Ollama’s 11434. Three priority tiers:

| Priority | How It’s Classified | Typical Latency |
|---|---|---|
| CRITICAL | /api/embed and /api/embeddings, auto-detected by URL path | ~34ms |
| HIGH | X-Priority: high header | 40–90s |
| NORMAL | Everything else (default) | 40–90s |

The key design choice: embeddings are auto-classified as CRITICAL by their URL path alone. No client changes needed for the most important case. The OpenClaw gateway sends LLM requests without any priority header, so they land in NORMAL by default. That is the right outcome: cron jobs are the bulk of that traffic and should always yield to embeddings.
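A minimal sketch of what that classification could look like. The function and constant names here are hypothetical; only the two embed paths, the X-Priority header, and the three tier names come from the design described above.

```javascript
// Hypothetical classifier: maps an incoming request to a priority tier.
const EMBED_PATHS = new Set(["/api/embed", "/api/embeddings"]);

function classify(url, headers) {
  // Strip any query string before matching on the path.
  const path = url.split("?")[0];
  // Embeds are CRITICAL by path alone, whatever headers the client sent.
  if (EMBED_PATHS.has(path)) return "CRITICAL";
  // Node's http module lowercases incoming header names.
  const hint = String(headers["x-priority"] || "").toLowerCase();
  if (hint === "high") return "HIGH";
  // No header, or an unrecognized value: default tier.
  return "NORMAL";
}

console.log(classify("/api/embed", {}));                       // CRITICAL
console.log(classify("/api/chat", { "x-priority": "high" }));  // HIGH
console.log(classify("/api/generate", {}));                    // NORMAL
```

Checking the path before the header means a client cannot accidentally demote an embed by sending the wrong priority.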

Passthrough endpoints bypass the queue entirely. Health checks, model listing, model info, model pulls. None of these need GPU resources, so they’re forwarded directly.
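As a rough illustration, the bypass check can be a set lookup. The paths below are real Ollama endpoints, but the exact list the proxy forwards directly is my assumption for this sketch, as are the names.

```javascript
// Assumed passthrough set: endpoints that never touch the GPU.
// The exact list used in production may differ.
const PASSTHROUGH_PATHS = new Set([
  "/",            // health check ("Ollama is running")
  "/api/version", // version info
  "/api/tags",    // model listing
  "/api/show",    // model info
  "/api/pull",    // model pulls
]);

// Returns true when a request should skip the queue and be forwarded as-is.
function isPassthrough(url) {
  return PASSTHROUGH_PATHS.has(url.split("?")[0]);
}

console.log(isPassthrough("/api/tags")); // true
console.log(isPassthrough("/api/chat")); // false
```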

620 lines. Zero npm dependencies, just Node.js stdlib. No Redis. No external state. The queue is three arrays. The cache is a Map. If the proxy restarts, state resets, and that’s fine because the queue drains in seconds and the cache rebuilds organically.
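To make "the queue is three arrays" concrete, here is a simplified sketch, not the production code. The class and job names are invented for illustration: one array per tier, FIFO within a tier, and dequeue always drains the highest non-empty tier first.

```javascript
// Sketch of a three-tier queue: one array per priority, FIFO within a tier.
const TIERS = ["CRITICAL", "HIGH", "NORMAL"];

class PriorityQueue {
  constructor() {
    this.queues = { CRITICAL: [], HIGH: [], NORMAL: [] };
  }

  enqueue(priority, job) {
    this.queues[priority].push(job);
  }

  // Returns the oldest job from the highest non-empty tier, or undefined.
  dequeue() {
    for (const tier of TIERS) {
      if (this.queues[tier].length > 0) return this.queues[tier].shift();
    }
    return undefined;
  }

  get size() {
    return TIERS.reduce((n, tier) => n + this.queues[tier].length, 0);
  }
}

const q = new PriorityQueue();
q.enqueue("NORMAL", "cron-llm-1");
q.enqueue("NORMAL", "cron-llm-2");
q.enqueue("CRITICAL", "embed-1");
q.enqueue("HIGH", "interactive-llm");
console.log(q.dequeue(), q.dequeue(), q.dequeue(), q.dequeue());
// prints "embed-1 interactive-llm cron-llm-1 cron-llm-2"
```

Array.prototype.shift is O(n), which is irrelevant at queue depths measured in single digits.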

This is boring technology doing exactly what it’s supposed to do.

The Concurrent Embed Discovery

After deploying v1, production logs revealed something I didn’t expect about Ollama’s internals.

Despite OLLAMA_NUM_PARALLEL=1, Ollama can handle cross-model concurrent requests. I tested this empirically: nomic-embed-text (137M parameters) completes in ~35ms even while Qwen3 30B is actively generating. Only 1.8% overhead on LLM speed. The embed model is small enough that it doesn’t meaningfully compete for memory bandwidth.

This led to a concurrent embed slot, a second dispatch path in the proxy. When a CRITICAL embed arrives and the primary slot has an active LLM request, the embed fires immediately on a separate slot. 42ms during active generation, instead of waiting in line.

One constraint: embed-during-embed still serializes. Same model, same VRAM, no benefit from concurrency there.
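The slot rules above boil down to a small decision function. This is a sketch under assumptions: the state shape, the names, and the choice to let an LLM request start while a lone embed is in flight are mine, not taken from the proxy source.

```javascript
// Hypothetical dispatch decision for a two-slot proxy.
// state.primary holds "llm", "embed", or null; state.embedSlot holds "embed" or null.
function chooseSlot(kind, state) {
  const embedInFlight = state.primary === "embed" || state.embedSlot === "embed";

  if (kind === "embed") {
    // Embed-during-embed serializes: same model, no gain from concurrency.
    if (embedInFlight) return "wait";
    // Idle proxy: just use the primary slot.
    if (state.primary === null) return "primary";
    // An LLM request holds the primary slot: fire on the concurrent slot.
    return "embedSlot";
  }

  // LLM requests only ever run on the primary slot (assumption: they may
  // start even if an embed is finishing on the concurrent slot).
  return state.primary === null ? "primary" : "wait";
}

console.log(chooseSlot("embed", { primary: "llm", embedSlot: null }));    // embedSlot
console.log(chooseSlot("embed", { primary: "llm", embedSlot: "embed" })); // wait
console.log(chooseSlot("llm", { primary: "llm", embedSlot: null }));      // wait
```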

Here’s the production reality check: the concurrent slot only fires ~1.3% of the time. In practice, embeds arrive in bursts after an LLM job completes (the agent processes its response, then fires embeds), not during generation. The feature is architecturally correct and valuable when it triggers, but most embeds benefit from the priority queue alone.

This is why I trust production logs over intuition. The optimization I was most excited about barely fires.

What Twelve Hours of Logs Taught Me

I pulled 12 hours of production data: 103 queued requests, 454 LLM chats, 1,664 embeds. Six improvements fell out of that analysis. Three are worth highlighting.

The NaN Port Bug. During a systemd restart cascade, the OLLAMA_QUEUE_TARGET environment variable briefly went undefined. parseInt(undefined) returns NaN in JavaScript. The proxy tried to forward requests to 127.0.0.1:NaN. Every request failed for 41 seconds.

Fix: parseInt(env) || 11434. One line. Prevents an entire class of restart-cascade failures. Defensive defaults aren’t glamorous, but they’re the difference between 41 seconds of downtime and zero.
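Spelled out as a small helper (the function name is hypothetical; the fallback pattern is the one-line fix itself):

```javascript
// Hypothetical helper: parse a port from an environment variable with a safe fallback.
function portFromEnv(value, fallback = 11434) {
  // parseInt(undefined, 10) is NaN, and NaN is falsy, so || picks the fallback.
  return parseInt(value, 10) || fallback;
}

console.log(portFromEnv(undefined));    // 11434
console.log(portFromEnv("11500"));      // 11500
console.log(portFromEnv("not-a-port")); // 11434

// In the proxy this would read something like:
// const targetPort = portFromEnv(process.env.OLLAMA_QUEUE_TARGET);
```

One side effect of using ||: a value of "0" also falls back to the default, which is fine here since port 0 is never a valid forwarding target.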

The Embed Cache. Embeddings are deterministic: same model, same input, same vector, every time. Yet the system was recomputing identical embeddings on every call. The Mem0 plugin especially hit this with warmup calls and repeated searches.

In-memory LRU cache. Key is model + "\0" + input_text. Five-minute TTL, 1000 entries, ~2MB of memory. Cache hits intercept before queue entry. Zero queue wait, zero GPU time. 6ms cached vs ~35ms uncached. The boring optimization that probably saves more aggregate time than the concurrent embed slot I was so excited about.
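A Map is enough to build this, because Map iterates in insertion order: deleting and re-inserting a key on every hit keeps the least recently used entry at the front. The sketch below is one way to do it, not the production code. The class name is invented, and it assumes the input is a single string.

```javascript
// Sketch of an LRU + TTL cache built on Map's insertion order.
class EmbedCache {
  constructor(maxEntries = 1000, ttlMs = 5 * 60 * 1000) {
    this.maxEntries = maxEntries;
    this.ttlMs = ttlMs;
    this.map = new Map();
  }

  // NUL separator so "a" + "bc" and "ab" + "c" cannot collide.
  static key(model, input) {
    return model + "\0" + input;
  }

  // `now` is injectable so expiry can be tested without waiting.
  get(model, input, now = Date.now()) {
    const k = EmbedCache.key(model, input);
    const entry = this.map.get(k);
    if (!entry) return undefined;
    if (now - entry.storedAt > this.ttlMs) {
      this.map.delete(k); // expired
      return undefined;
    }
    // Re-insert to mark this entry as most recently used.
    this.map.delete(k);
    this.map.set(k, entry);
    return entry.value;
  }

  set(model, input, value, now = Date.now()) {
    const k = EmbedCache.key(model, input);
    this.map.delete(k);
    this.map.set(k, { value, storedAt: now });
    if (this.map.size > this.maxEntries) {
      // The first key in iteration order is the least recently used.
      this.map.delete(this.map.keys().next().value);
    }
  }
}
```

A lookup happens before the request is enqueued, so a hit never touches the queue or the GPU.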

The Silent Timeout Mismatch. Two shell scripts wrapping RAG search pipelines ran their commands under a hard timeout 30, a 30-second limit. But embed timeouts had been bumped to 90 seconds to accommodate queue waits. The shell script would kill the Python process at 30 seconds while the embed was still legitimately waiting in queue. No error message. The search just returned nothing.

This one is a reminder that system boundaries are where bugs hide. Every outer timeout in a pipeline needs to be at least as long as the timeouts of the calls it wraps.

What I Didn’t Build

Things I explicitly researched and rejected:

- Request batching. Ollama Issue #6262 shows embedding quality degrades at batch sizes above 16. Not worth the trade-off.

- Circuit breakers. Already have a watchdog agent (Bones) monitoring Ollama health via Telegram. Adding circuit breaker logic would duplicate responsibility.

- Model-aware scheduling. Only two models. Not enough diversity to justify a fair-share scheduler like ollamaMQ’s round-robin.

- Redis or external state. In-process state is fine. The queue drains in seconds. The cache rebuilds organically. Adding a dependency would be complexity without value.

Knowing what not to build is half the work. I researched four existing Ollama proxies (ollamaMQ, Olla, LLMProxy, and Ollama’s own parallel mode) before writing a line of code. None fit. They’re built for multi-GPU routing, multi-model fairness, or cloud-style health checking. My problem was simpler: priority scheduling on a single GPU with two models.

The Numbers

Before the proxy: Embeddings timing out at 80–270 seconds. Memory, search, RAG, and trading systems failing intermittently.

After: Embeddings completing in under 50ms regardless of LLM queue depth. Cached embeds in 6ms. Zero embedding timeouts.

The proxy: 620 lines. Zero dependencies. Running as a systemd service since deployment with zero crashes. 21 tests covering the NaN port defense, cache behavior, EWMA latency tracking, passthrough routing, and concurrent embed dispatch.

The GPU was never the bottleneck. Scheduling was. A 620-line proxy with no dependencies solved a problem that was breaking four critical systems, and the most impactful optimizations weren’t the clever ones. They were the boring ones I found by reading logs.

Build it. Ship it. Read the logs. Fix what the logs tell you to fix. That’s the process.

Self-hosting LLMs for multi-agent systems, or hitting similar scaling walls with Ollama? I’d love to compare notes. Reach out at architgupta941@gmail.com or find me on X.